Load balancing is a critical component for ensuring the performance and availability of services and applications in high-demand environments. Among the various load balancing algorithms, Round Robin Load Balancing stands out for its simplicity and efficiency in distributing traffic across servers.

In this article, we’ll explore how Round Robin Load Balancing works, its key benefits, and how to implement it effectively to enhance the reliability and performance of your infrastructure.

What is Round Robin Load Balancing?

Round Robin Load Balancing is a widely used technique for evenly distributing network traffic among multiple servers. Its simplicity and effectiveness in optimizing available resources make it one of the most popular algorithms for load balancers. By distributing the load evenly, Round Robin improves system performance and availability, preventing individual servers from becoming overwhelmed.

How the Round Robin Algorithm Works

A load balancer relies on an algorithm to decide which server receives each incoming request. Commonly used options include Round Robin, Weighted Round Robin, and Dynamic Round Robin, among others.

The Round Robin algorithm follows a cyclic pattern in which incoming requests are assigned sequentially to the available servers. Each new request goes to the next server on the list, and after the last server the process repeats from the first one. Every server therefore receives roughly the same number of requests, which keeps any single server from taking a disproportionate share of the traffic.

Imagine a scenario with three servers: Server A, Server B, and Server C. The first request is sent to Server A, the next to Server B, then to Server C, and the cycle starts over with Server A. This process ensures each server receives a similar number of requests, maximizing resource efficiency and maintaining a balanced workload across the system.
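The three-server scenario above can be sketched in a few lines of Python. This is a minimal illustration rather than a production implementation: the class name `RoundRobinBalancer` and its `pick` method are hypothetical names chosen for this example.

```python
class RoundRobinBalancer:
    """Minimal round robin selector (illustrative sketch only)."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("at least one server is required")
        self._servers = list(servers)
        self._next = 0  # index of the server that gets the next request

    def pick(self):
        """Return the server for the next request and advance the cursor."""
        server = self._servers[self._next]
        # Wrap around to the first server after the last one.
        self._next = (self._next + 1) % len(self._servers)
        return server


balancer = RoundRobinBalancer(["Server A", "Server B", "Server C"])
assignments = [balancer.pick() for _ in range(4)]
print(assignments)  # ['Server A', 'Server B', 'Server C', 'Server A']
```

The fourth request lands on Server A again, which is the wrap-around step described above.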

This approach is particularly effective when servers have similar capacity and response times. In such cases, each server handles an evenly distributed load, improving resource utilization and preventing any single server from being overburdened while others remain idle.

Benefits of Round Robin Load Balancing

Round Robin Load Balancing offers several key advantages for traffic management and system performance:

- Equal Traffic Distribution: Traffic is evenly distributed among servers, optimizing system performance.

- Simplified Maintenance: Servers can be taken offline for maintenance without disrupting overall service. The load balancer automatically redirects requests to active servers.

- Improved Performance: By balancing the load, response times are reduced, and system efficiency is enhanced.

- Flexible Scalability: Adding new servers is straightforward, and the algorithm continues to distribute traffic effectively without requiring complex adjustments.
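The maintenance and scalability points can be illustrated with a small sketch in which servers leave and join the rotation while requests keep flowing. The `ServerPool` class and its `add`, `remove`, and `pick` methods are hypothetical names for this example. A real load balancer would usually drive these changes from health checks or an administration interface.

```python
class ServerPool:
    """Round robin pool whose membership can change at runtime (sketch)."""

    def __init__(self, servers):
        self._servers = list(servers)
        self._index = 0  # position of the server that gets the next request

    def add(self, server):
        """Put a new or repaired server into the rotation."""
        self._servers.append(server)

    def remove(self, server):
        """Take a server out of the rotation, e.g. for maintenance."""
        position = self._servers.index(server)
        self._servers.pop(position)
        # Keep the cursor on the same "next" server after the list shrinks.
        if position < self._index:
            self._index -= 1

    def pick(self):
        if not self._servers:
            raise RuntimeError("no active servers in the pool")
        self._index %= len(self._servers)
        server = self._servers[self._index]
        self._index += 1
        return server


pool = ServerPool(["Server A", "Server B", "Server C"])
before = [pool.pick() for _ in range(2)]   # Server A, Server B

pool.remove("Server B")                    # Server B goes offline
during = [pool.pick() for _ in range(3)]   # only A and C are used

pool.add("Server D")                       # scale out with a new server
after = [pool.pick() for _ in range(3)]    # D joins the cycle
```

No change to the selection logic is needed when the pool grows or shrinks, which is the property the list above describes.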

Overall, Round Robin Load Balancing is a fundamental technology in server management that significantly contributes to the efficient utilization of resources, optimized performance, and improved reliability of enterprise systems.

Load Balancing Algorithms

Load balancing algorithms are designed to evenly distribute incoming requests among the available servers in a server cluster. By doing so, these algorithms optimize resource utilization, improve response times, and ensure high availability of applications.

Round Robin Algorithm

The Round Robin Algorithm is one of the simplest and most widely used load balancing algorithms. It works cyclically: each server in the pool is assigned the next request in turn, and once the last server is reached, the cycle starts again.

By giving every server an equal share of requests, the Round Robin Algorithm maintains a fair distribution of the workload. However, it does not take server health or performance metrics into account, so a slow or struggling server still receives the same number of requests as the others, which can lead to suboptimal results in certain scenarios.

Weighted Round Robin Algorithm

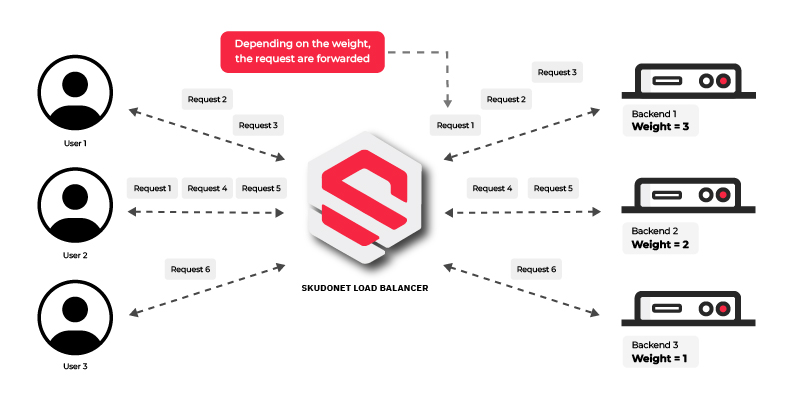

Building upon the Round Robin Algorithm, the Weighted Round Robin Algorithm assigns a weight to each server based on its capabilities. Servers with higher weights receive a larger proportion of requests, so the workload is split in proportion to capacity.

This algorithm allows administrators to allocate more resources to high-performance servers, ensuring optimal utilization of available resources. However, it requires manual configuration and may become challenging to manage as the server pool grows larger.
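One possible way to implement weighting is sketched below. It uses the "smooth" weighted round robin variant (the approach NGINX uses for its weighted upstreams), which interleaves servers instead of sending long bursts to the heaviest one. The class name, the weights, and the server names are assumptions made for this example, and other products may implement weighting differently.

```python
from collections import Counter


class WeightedRoundRobin:
    """Smooth weighted round robin (illustrative sketch)."""

    def __init__(self, weights):
        # weights: mapping of server name -> positive integer weight
        if not weights or any(w <= 0 for w in weights.values()):
            raise ValueError("weights must be a non-empty map of positive numbers")
        self._weights = dict(weights)
        self._current = {name: 0 for name in self._weights}
        self._total = sum(self._weights.values())

    def pick(self):
        # 1. Every server gains its own weight.
        for name, weight in self._weights.items():
            self._current[name] += weight
        # 2. The server with the highest running score is selected
        #    (ties go to the server listed first).
        chosen = max(self._current, key=self._current.get)
        # 3. The selected server pays back the total weight.
        self._current[chosen] -= self._total
        return chosen


# Hypothetical weights: Server A is assumed to have five times the capacity.
wrr = WeightedRoundRobin({"Server A": 5, "Server B": 1, "Server C": 1})
sequence = [wrr.pick() for _ in range(7)]
counts = Counter(sequence)
print(counts)  # Server A: 5, Server B: 1, Server C: 1
```

Over one full cycle of seven requests, each server is selected exactly as many times as its weight.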

Dynamic Round Robin Algorithm

The Dynamic Round Robin Algorithm takes load balancing to the next level by considering the real-time performance and health metrics of servers. Instead of blindly assigning requests in a fixed order, this algorithm adjusts the distribution based on the server’s current capacity and health status.

With the Dynamic Round Robin Algorithm, underperforming or overloaded servers receive fewer requests, while healthy servers can handle more significant portions of the workload. This adaptive approach optimizes resource utilization and ensures optimal performance for users.
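The adaptive behavior can be approximated by recomputing each server's weight from live metrics before every selection. The sketch below is one possible interpretation, not a reference implementation: the `Server` fields, the `effective_weight` formula (free capacity, or zero when unhealthy), and the metric values are all assumptions for illustration. In practice the metrics would come from health checks or monitoring agents.

```python
from dataclasses import dataclass


@dataclass
class Server:
    name: str
    healthy: bool = True
    capacity: int = 10        # hypothetical max concurrent requests
    active_requests: int = 0  # hypothetical live metric
    score: float = 0.0        # running state used by the selector


def effective_weight(server):
    """Assumed formula: free capacity, or 0 for an unhealthy server."""
    if not server.healthy:
        return 0
    return max(server.capacity - server.active_requests, 0)


def pick(servers):
    """Weighted selection where weights are recomputed on every call."""
    weights = {s.name: effective_weight(s) for s in servers}
    candidates = [s for s in servers if weights[s.name] > 0]
    if not candidates:
        raise RuntimeError("no server is currently able to take requests")
    total = sum(weights[s.name] for s in candidates)
    for s in candidates:
        s.score += weights[s.name]
    chosen = max(candidates, key=lambda s: s.score)
    chosen.score -= total
    return chosen


servers = [
    Server("Server A"),                      # idle and healthy
    Server("Server B", active_requests=8),   # nearly saturated
    Server("Server C", healthy=False),       # failed its health check
]
picked = [pick(servers).name for _ in range(12)]
```

With these sample metrics, the idle server takes most of the traffic, the busy one takes a small share, and the unhealthy one receives nothing until its status changes.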

Commonly Used Load Balancing Algorithms

Other load balancing algorithms that are commonly used include:

- Least Connections: This algorithm directs new requests to the server with the fewest active connections, minimizing the chances of overloading a server.

- IP Hash: The IP Hash algorithm uses the client’s IP address to determine the server to which the request should be routed. This ensures that requests from the same client are consistently sent to the same server, which can be beneficial in certain scenarios.

- Least Time: The Least Time algorithm directs requests to the server with the lowest response time, aiming to provide users with the fastest possible response.

- Source IP Affinity: Also known as Sticky Session or Session Persistence, this algorithm ensures that requests from the same client are always routed to the same server, maintaining session state and ensuring consistency.
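Two of the algorithms in this list are simple enough to sketch directly. The function names, connection counts, and IP address below are hypothetical. The IP Hash sketch uses a SHA-256 digest rather than Python's built-in `hash()`, because the built-in string hash is randomized per process and would not route a client consistently across restarts.

```python
import hashlib


def least_connections(active_connections):
    """Return the server with the fewest active connections.

    active_connections: mapping of server name -> current connection count.
    """
    return min(active_connections, key=active_connections.get)


def ip_hash(client_ip, servers):
    """Map a client IP to a server so the same client hits the same server."""
    digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(servers)
    return servers[index]


servers = ["Server A", "Server B", "Server C"]

target = least_connections({"Server A": 12, "Server B": 3, "Server C": 7})
print(target)  # Server B

first = ip_hash("203.0.113.7", servers)
second = ip_hash("203.0.113.7", servers)
print(first == second)  # True: the mapping is deterministic
```

Note that with this simple modulo scheme, adding or removing a server changes the mapping for many clients, which is one reason some deployments prefer consistent hashing.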

Each load balancing algorithm has its own strengths and weaknesses, making it crucial for system administrators to choose the most appropriate algorithm based on the specific requirements and characteristics of their server environment.

Round Robin Load Balancing with SKUDONET

SKUDONET is an open source load balancing platform that adapts the Round Robin algorithm to enterprise environments through a weighted approach. It uses the Weighted Round Robin Algorithm, which distributes traffic according to weights that reflect each server's capacity.

With this system, more powerful servers handle a larger share of requests, while less capable ones handle fewer, ensuring efficient balancing and preventing overloading.

Why Choose SKUDONET for Your Round Robin Needs?

There are several reasons why SKUDONET is the ideal choice for businesses looking to implement Round Robin Load Balancing:

- Optimized for High Performance: Our load balancer is designed to handle high traffic volumes without compromising performance.

- Compatibility with Diverse Infrastructures: Works seamlessly in on-premises, hybrid, and cloud environments, adapting to various technological needs.

- High Availability and Fault Tolerance: SKUDONET ensures continuous availability through advanced load balancing and failover techniques.

- Customization: Tailor the solution to meet your company’s specific needs with fine-grained adjustments.

- Ease of Configuration: SKUDONET simplifies load balancing implementation with an intuitive interface, reducing setup time.

Try SKUDONET Now

Round Robin Load Balancing is a highly efficient solution for evenly distributing traffic among servers, improving the performance, availability, and scalability of applications. With SKUDONET, this technique is taken to the next level, optimizing traffic management and ensuring high availability.

Try SKUDONET Enterprise Edition with a free 30-day trial and discover how it can boost the efficiency and performance of your systems.